|

I am a PhD student in Computer Science at the University of South Florida (USF). My interests are in Continual Learning and Neuro-mimetic AI. My research lies at the intersection of Representation Learning, Associative Memory, and Predictive Coding. At USF, I am advised by Dr. Ankur Mali and am a member of the TKAI lab. I completed my MS in Computer Science at the Rochester Institute of Technology (RIT) with Dr. Alexander Ororbia. Email / CV / Google Scholar / GitHub / LinkedIn / ORCiD / Blog |

|

|

I am interested in building intelligent systems that evolve over time and reuse their existing knowledge to learn new tasks. My current research focuses on developing neural training techniques that work better than backpropagation across domains. |

|

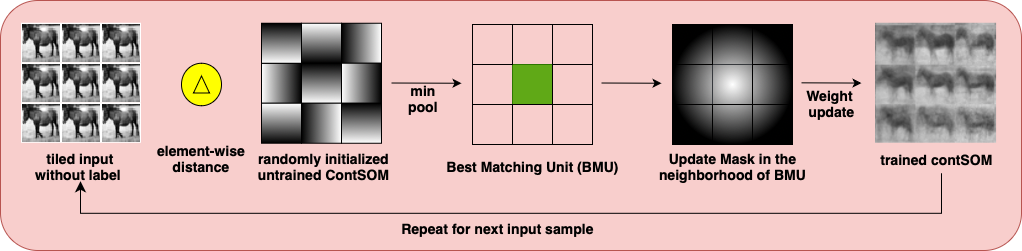

Hitesh Vaidya, Travis Desell, Ankur Mali, Alexander Ororbia Preprint pdf / arxiv / bibtex CSOM is a competitive-learning-based neural model that reduces catastrophic forgetting in a truly unsupervised, online, class-incremental learning setting. We show with a theoretical proof that CSOM converges steadily to a stable model that stores linearly separable clusters of input samples without needing any class labels or task boundaries. |

|

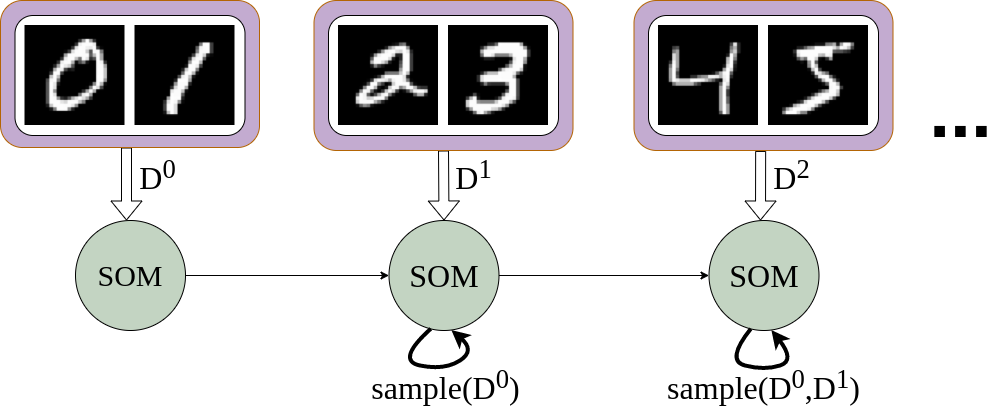

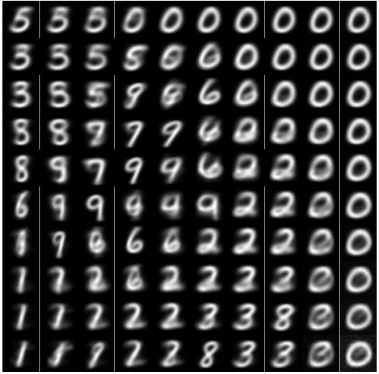

Hitesh Vaidya, Travis Desell, Alexander Ororbia AAAI-22 Student Abstract and Poster Program pdf / arxiv / bibtex In this work, we propose the c-SOM (continual SOM), an SOM that actively reduces the amount of forgetting it experiences. Specifically, we modify the SOM’s decay to be task-dependent and extend its units to self-induce a form of generative rehearsal to improve memory retention. |

|

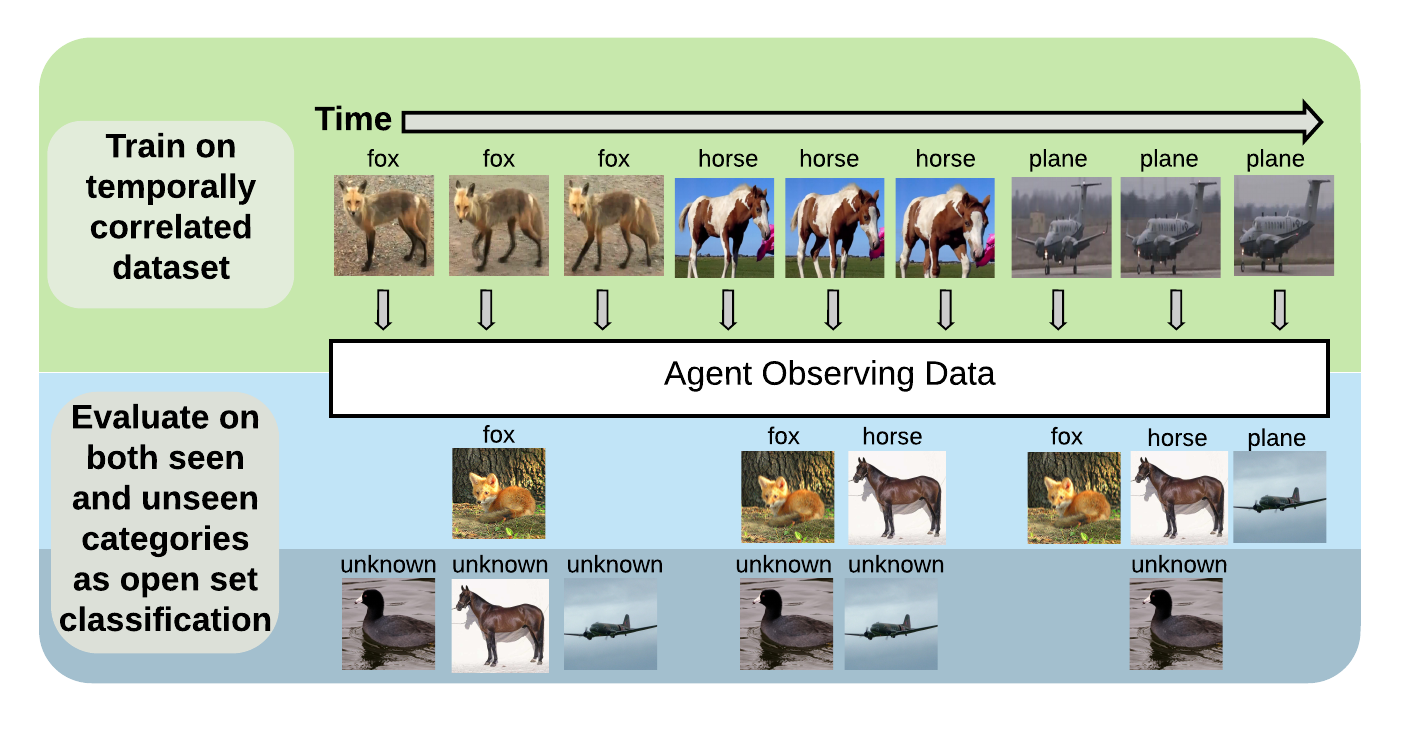

Ryne Roady, Tyler Hayes, Hitesh Vaidya, Christopher Kanan CVPR Workshop on Continual Learning, 2020 Video / code / bibtex In this work, we introduce Stream-51, a new dataset for streaming classification consisting of temporally correlated images from 51 distinct object categories and additional evaluation classes outside of the training distribution to test novelty recognition. We establish unique evaluation protocols, experimental metrics, and baselines for our dataset in the streaming paradigm. |

|

Hitesh Vaidya pdf / bibtex This is my MS thesis, in which I survey lifelong/continual learning. I discuss current methods, applications, and limitations in lifelong learning. Further, I show that unsupervised learning approaches like self-organizing maps (SOMs) can be used as an oracle to perform replay in lifelong learning neural networks. In the process, I present several novel methods to reduce forgetting in class-incrementally trained SOMs and provide relevant baselines. |

|

USF

|

|

RIT

|

|

My life in Rochester, NY was really memorable because of my friends. I like exploring new things in life, and road trips are one of the best ways to do that, in my opinion. Badminton and running are my all-time favorites, besides swimming and weight training. When indoors, I like doing yoga, reading, and listening to good music (my tastes span a wide range of genres, from Indian classical to pop and R&B). I can play a bit of tabla and flute too. |

|

|